Embedding search now active on this very blog:

Embeddings

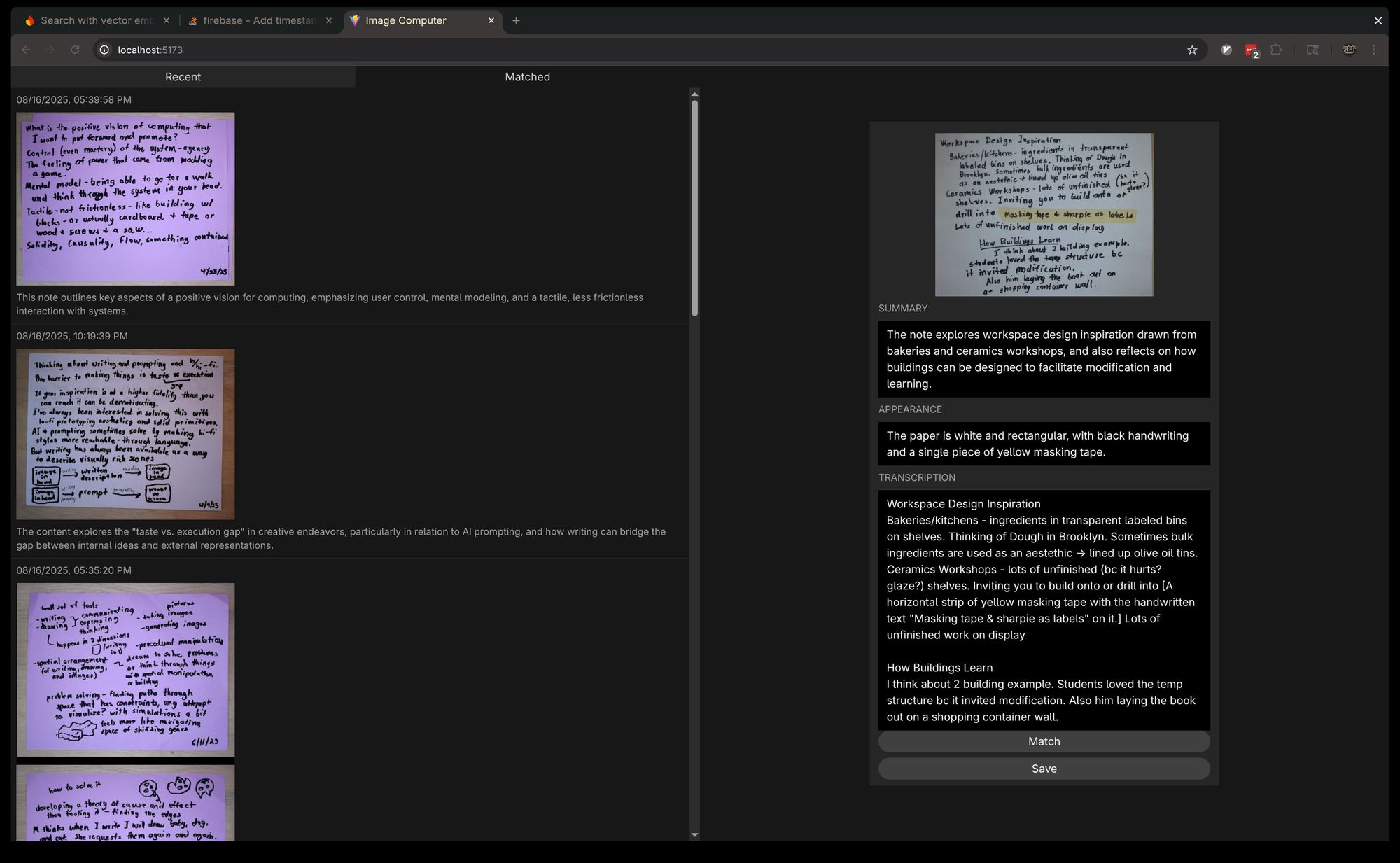

I got the basics of vector embedding retrieval working for my tabletop notes. Thinking about a more intensely minimal interface that really is image only.

Spatial apps for exploring ideas

Embeddings raise the possibility of exploring ideas spatially. The central challenge is balancing automatic semantic layout against letting users group or cluster ideas themselves.

User movement mirrors the experience of the best physical brainstorming - cutting up pieces and rearranging, examining, rearranging again.

Embeddings promise automatic clustering and, hopefully, a way to surface new connections.

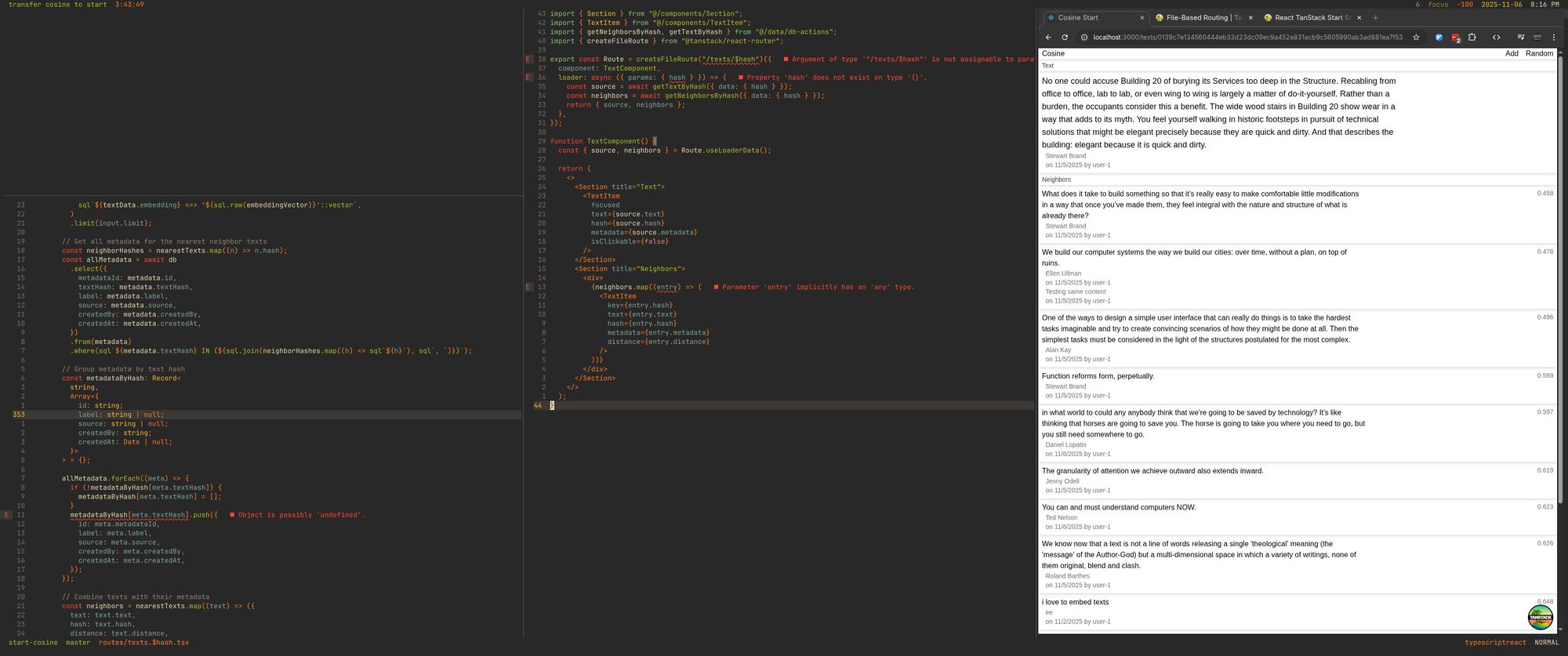

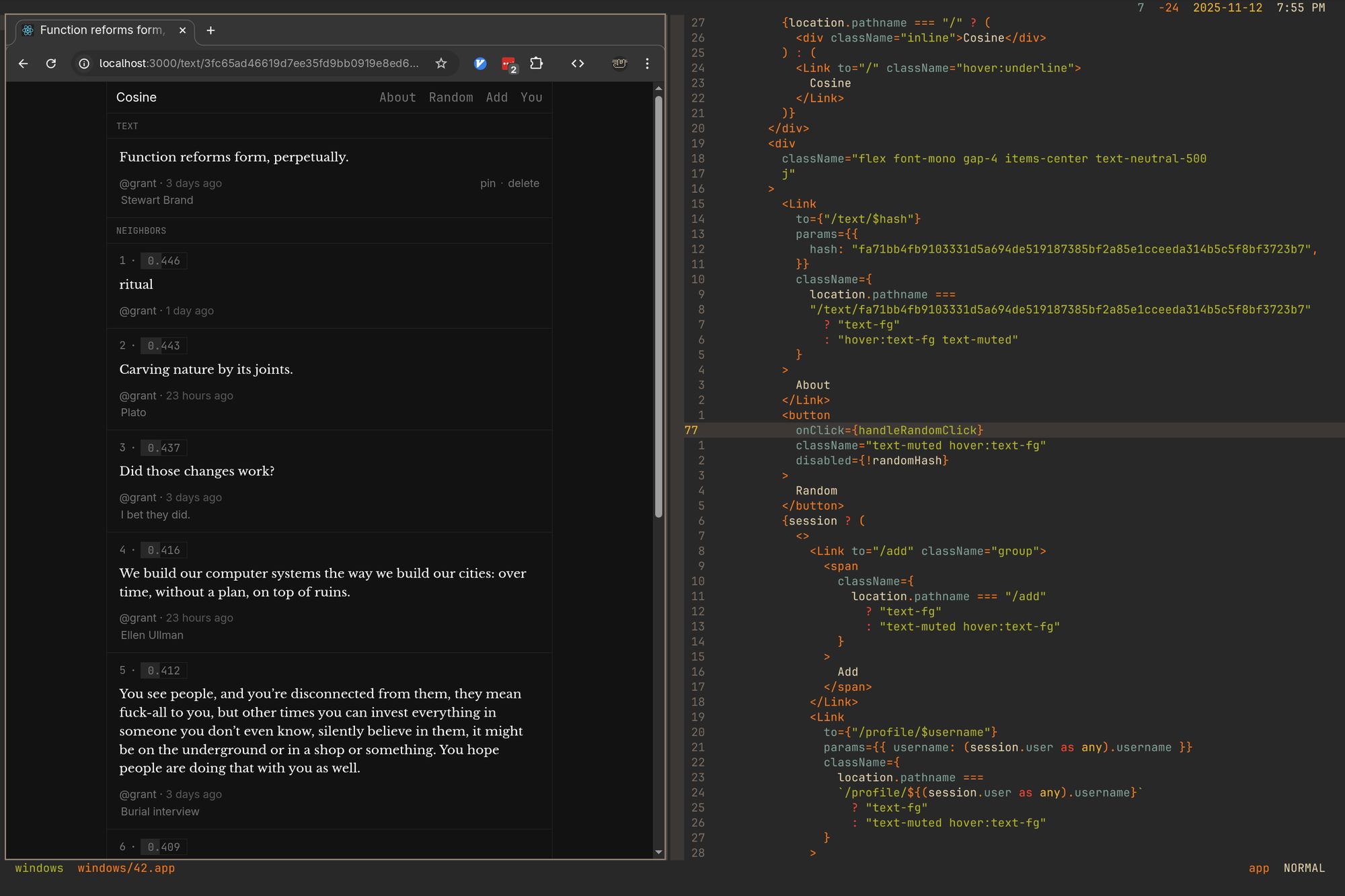

Cosine

New on Constraint Systems:

Cosine - embed and hash any text and see its nearest neighbors

https://cosine.constraint.systems
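For anyone curious what "nearest neighbors" means here, this is a minimal sketch of ranking by cosine similarity. It is not the code behind Cosine: the function names and the tiny 3-dimensional vectors are made up for illustration, and real embeddings would come from a model and have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)


def nearest_neighbors(query, items, k=3):
    """Return the k (label, score) pairs most similar to query, best first."""
    scored = [(label, cosine_similarity(query, vec)) for label, vec in items.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy vectors standing in for real embeddings (hypothetical values).
toy_embeddings = {
    "dice rolls": [0.9, 0.1, 0.0],
    "card decks": [0.8, 0.3, 0.1],
    "sourdough": [0.0, 0.2, 0.9],
}
neighbors = nearest_neighbors([1.0, 0.2, 0.0], toy_embeddings, k=2)
```

Because cosine similarity only looks at direction, a long note and a short note about the same thing can still land next to each other.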

I made a GIF of running UMAP on the digits dataset, adding one digit into the dataset and retraining each frame. This was probably a weird thing to do but it is helping me to develop more of an intuition of what it's preserving (nearest neighbors) and what is not informative (overall positioning/scale).
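One way to check that intuition without running UMAP at all: take a 2D layout, rotate, scale and shift it, and measure how many nearest neighbors each point keeps. The sketch below is a toy under stated assumptions. The helper names and the six hand-picked points are hypothetical, and it only illustrates why position and scale carry no neighbor information, not how UMAP itself works.

```python
import math


def knn_sets(points, k):
    """For each point, the set of indices of its k nearest other points."""
    result = []
    for i, p in enumerate(points):
        others = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        result.append({j for _, j in others[:k]})
    return result


def neighbor_overlap(layout_a, layout_b, k=2):
    """Mean fraction of k-nearest neighbors shared between two layouts."""
    sets_a, sets_b = knn_sets(layout_a, k), knn_sets(layout_b, k)
    return sum(len(a & b) / k for a, b in zip(sets_a, sets_b)) / len(layout_a)


def rotate_scale_shift(points, angle, scale, shift):
    """Apply a rotation, uniform scale and translation to 2D points."""
    c, s = math.cos(angle), math.sin(angle)
    return [
        (scale * (c * x - s * y) + shift[0], scale * (s * x + c * y) + shift[1])
        for x, y in points
    ]


# Two small clusters (hypothetical coordinates).
layout = [(0, 0), (1, 0), (0, 2.5), (10, 10), (11.5, 10), (10, 13)]

# Same layout, but spun around, blown up and moved: neighbors are unchanged.
moved = rotate_scale_shift(layout, angle=1.0, scale=3.0, shift=(50, -20))
same_neighbors = neighbor_overlap(layout, moved)

# Swapping two points across clusters does change who is near whom.
swapped = [layout[0], layout[1], layout[3], layout[2], layout[4], layout[5]]
changed_neighbors = neighbor_overlap(layout, swapped)
```

Comparing consecutive frames this way, rather than by where the clusters sit on screen, is closer to what the projection is actually trying to keep stable.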

Cosine WIP

Working on a stripped down "embed anything and see its neighbors" prototype.

Need to sort out some type errors though

You can see how UMAP clusters are structured by things like the orientation of the number if you travel along their axes.

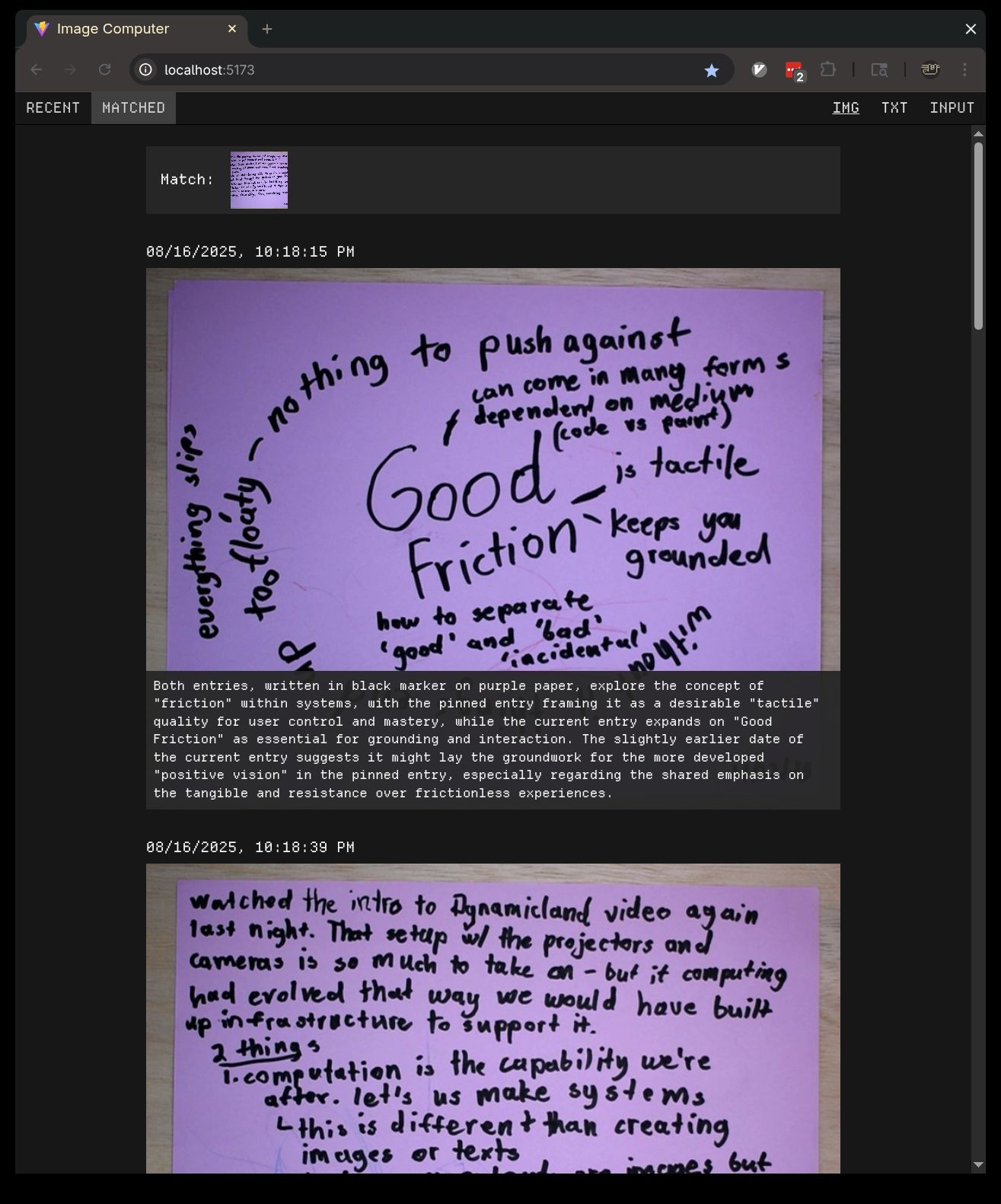

Matching and connecting

Promising result from ranking my handwritten notes using embeddings and then prompting for connections to the top result. Need to prompt it into less clumsy language though.
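A rough sketch of the "rank, then prompt for connections" step, assuming the notes have already been scored against a query by some embedding similarity. The function names and the prompt wording are hypothetical, and no model is called here. The block only builds the text that would be sent.

```python
def pick_top(scored_notes):
    """Return the note text with the highest similarity score."""
    text, _score = max(scored_notes, key=lambda pair: pair[1])
    return text


def build_connection_prompt(query_note, top_note):
    """Assemble a prompt asking for the link between two notes (wording is a guess)."""
    return (
        "Here are two notes.\n\n"
        f"Note A:\n{query_note}\n\n"
        f"Note B:\n{top_note}\n\n"
        "In one or two plain sentences, describe how Note B connects to Note A. "
        "Avoid filler phrases."
    )


# Hypothetical notes with made-up similarity scores.
scored = [
    ("Index cards make rearranging cheap.", 0.81),
    ("Buy more pens.", 0.12),
]
prompt = build_connection_prompt("Spatial layouts help me think.", pick_top(scored))
```

Asking for plain sentences and a length limit in the prompt is one lever for getting less clumsy language back.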

Working with embeddings - particularly with the goal of aiding thinking - a lot of the challenge is deciding where to draw the line between chunks. Paragraph-by-paragraph? Essay-length? Book-length?
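To make the chunking question concrete, here is a minimal sketch of two common options: one chunk per paragraph, or fixed-size word windows that overlap. The function names and default sizes are assumptions chosen for illustration, not a recommendation.

```python
def chunk_by_paragraph(text):
    """Split on blank lines, dropping empty paragraphs."""
    return [p.strip() for p in text.split("\n\n") if p.strip()]


def chunk_by_words(text, size=50, overlap=10):
    """Split into windows of `size` words, each sharing `overlap` words with the last."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    chunks = []
    start = 0
    while start < len(words):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the final window already reaches the end of the text
        start += size - overlap
    return chunks


sample = "First idea.\n\nSecond idea, a bit longer.\n\nThird idea."
paragraph_chunks = chunk_by_paragraph(sample)
window_chunks = chunk_by_words("a b c d e f g h", size=5, overlap=2)
```

Paragraph chunks follow the writer's own boundaries, while overlapping windows guarantee a roughly even size at the cost of cutting thoughts mid-sentence.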

Inspiration

Some folks at Tallinn University built a dataset visualizer based on some of my UMAP visualization demos. Very cool to see! From: https://collection-space-navigator.github.io/CSN/

Embeddings WIP

Getting pretty close with the embeddings app.

Article embedding visualization. From: https://twitter.com/ocuatrecasas/status/1667717542784147456

Inspiration

Through desperate googling I rediscovered this great post and project on using three.js for t-SNE visualization.

From: https://douglasduhaime.com/posts/visualizing-tsne-maps-with-three-js.html

Book library experiments

Collecting some recordings of recent experiments. Taking photos of my books, using Gemini image edit generation to isolate those books and then removing their backgrounds, embedding generated summaries of those books and exploring them across various layouts.

Spines and covers

I wrote an entry for our newsletter in my experimental notecard-based writing app. It worked pretty well. Writing is unfortunately still hard.

Inspiration

SpaceSheet - interactive latent space exploration through a spreadsheet interface

Book to music experiment

Book-to-soundtrack experiment - a projector setup where I send the book image off to Gemini for recommendations and then play them with Spotify. Meant to be kind of like putting on a record but for objects. (I clipped out the loading times.)

I added t-SNE and UMAP with min_dist=0.8 as algorithm options in the UMAP explorer. Three.js and tween.js animate the transition of 70,000 points with no problem.
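The transition between two layouts boils down to interpolating every point from its old position to its new one with an easing curve. Below is a minimal Python sketch of that idea. It is not the tween.js API or the explorer's actual code, and the function names are hypothetical. The easing is the standard quadratic ease-in-out.

```python
def ease_in_out_quad(t):
    """Quadratic ease-in-out: slow start, fast middle, slow end, for t in [0, 1]."""
    return 2 * t * t if t < 0.5 else 1 - (-2 * t + 2) ** 2 / 2


def interpolate_layout(start, end, t, easing=ease_in_out_quad):
    """Blend each point from its start position to its end position at progress t."""
    e = easing(t)
    return [
        tuple(a + (b - a) * e for a, b in zip(p, q))
        for p, q in zip(start, end)
    ]


# Two points moving between two hypothetical layouts.
layout_a = [(0.0, 0.0), (1.0, 1.0)]
layout_b = [(2.0, 4.0), (3.0, -1.0)]
halfway = interpolate_layout(layout_a, layout_b, 0.5)
```

Since every point is blended independently from just its two endpoints, the per-frame work grows linearly with the number of points.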